In the previous part I also tackled the first step: have a mechanism to push each Cassandra change to Kafka with a timestamp. But only one approach has been considered there: Cassandra triggers. Here, I’ll try out Cassandra Change Data Capture (CDC), so let’s get started.

In order to make comparisons easier later, I’ll use the same data model as in the first part.

    CREATE TABLE IF NOT EXISTS movies_by_genre (
      PRIMARY KEY ((genre, year), rating, duration)
    ) WITH CLUSTERING ORDER BY (rating DESC, duration ASC)

Infrastructure

Infrastructure is also the same: two Cassandra 3.11.0 nodes, two Kafka 0.10.1.1 nodes, one Zookeeper 3.4.6, and everything packaged to run from Docker Compose.

My impression is that there is not much documentation on CDC, since I have struggled to grasp the concepts and how all of it should function. Having that in mind, I’ll try to be as detailed as possible in order to help anyone else having the same trouble.

First of all, CDC is available from Cassandra 3.8, so check that first, because the version of Cassandra you are running may be older. The entire documentation on Cassandra CDC can be found here. It’s not much, but still contains useful information. To turn on CDC, cdc_enabled must be set to true in the cassandra.yaml.
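The two preconditions mentioned here (Cassandra 3.8 or newer, and cdc_enabled set to true in cassandra.yaml) can be checked and applied from a script. What follows is only a minimal sketch, not the setup used in this post: the function names cdc_supported and enable_cdc are hypothetical, the path to cassandra.yaml is whatever your installation uses, and the version comparison assumes a sort that supports -V (GNU coreutils does).

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; names are not from the original post.

# Succeeds when the given Cassandra version is at least 3.8,
# the first release that ships CDC.
cdc_supported() {
  local version="$1" minimum="3.8"
  # sort -V orders version strings; if the minimum comes out first
  # (or both are equal), the given version is new enough.
  [ "$(printf '%s\n%s\n' "$minimum" "$version" | sort -V | head -n 1)" = "$minimum" ]
}

# Sets "cdc_enabled: true" in the given cassandra.yaml.
# Rewrites an existing top-level cdc_enabled line, or appends one if absent.
enable_cdc() {
  local yaml="$1" tmp
  tmp="$(mktemp)"
  if grep -q '^cdc_enabled:' "$yaml"; then
    sed 's/^cdc_enabled:.*/cdc_enabled: true/' "$yaml" > "$tmp"
  else
    { cat "$yaml"; echo 'cdc_enabled: true'; } > "$tmp"
  fi
  # Copy the content back instead of mv, so the file keeps its permissions.
  cat "$tmp" > "$yaml"
  rm -f "$tmp"
}

# Example usage (the yaml path is an assumption, adjust to your install):
#   cdc_supported 3.11.0 && enable_cdc /etc/cassandra/cassandra.yaml
```

A node only reads cassandra.yaml at startup, so the change takes effect after a restart. Note also that CDC is switched on per table with the cdc=true table property, in addition to the node-level setting.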

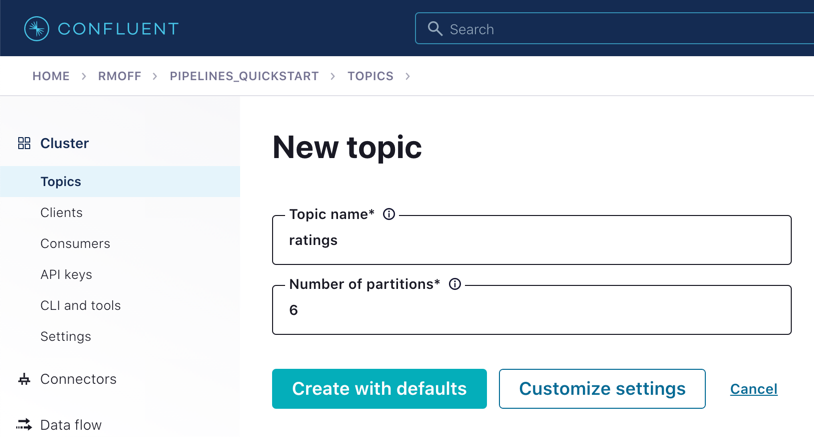

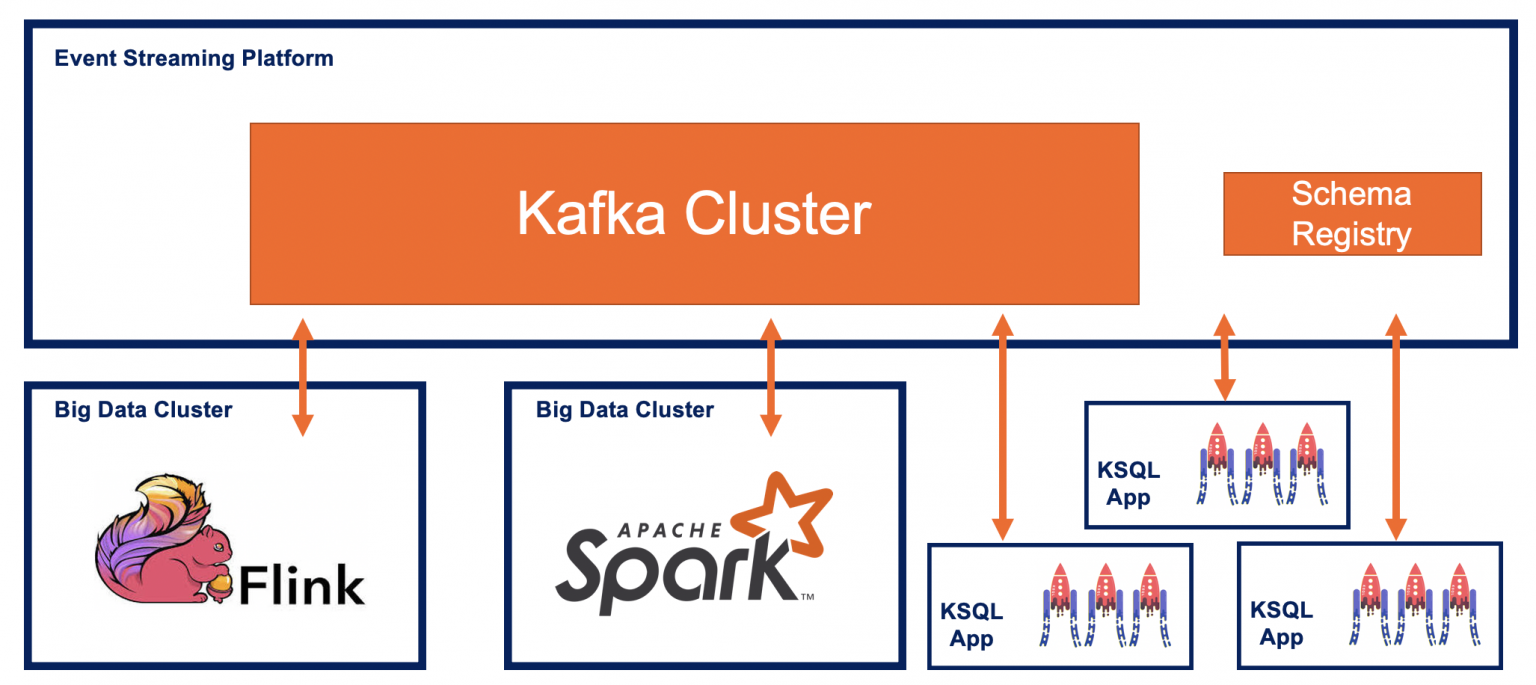

If you haven’t read the previous part of this blog, you can find it here. There, I have laid the necessary steps for injecting the Kafka cluster into the system ‘before’ the Cassandra cluster.

Quick ’n Easy Population of Realistic Test Data into Kafka

In Kafka, Kafkacat, Mockaroo, Testing

tl;dr: Use curl to pull data from the Mockaroo REST endpoint, and pipe it into kafkacat, thus:

    curl -s "" | \
    kafkacat -b localhost:9092 -t purchases -P

Three things I love: Kafka, kafkacat, and Mockaroo. And in this post I get to show all three.

Mockaroo is a very cool online service that lets you quickly mock up test data. What sets it apart from SELECT RANDOM(100) FROM DUMMY is that it has lots of different classes of test data for you to choose from. Wanting to simulate some users? It can do that. So you can build up realistic datasets at a few clicks of the mouse, and then export them to a bunch of formats, including CSV, JSON, and even SQL INSERT INTO statements (and, of course, it also provides the CREATE TABLE DDL!).

I’ve used Mockaroo many times over the years, often as a source for analytics visualisation tools that I’ve been working with. Now I’m doing a bunch of work with KSQL, and want some useful test data with which to demonstrate certain queries and concepts. KSQL is the streaming SQL engine for Apache Kafka, so I needed to get a bunch of test data into Kafka topics.

Set the output to JSON (make sure it’s not as a JSON array). Mockaroo provides a REST endpoint from which you can pull the data for a given schema. To use it you need to save your schema, and you need to register (for free). With the REST endpoint you can get any number of records using curl.

I’m using the most-excellent kafkacat (about which you can read more here), which is a very simple, yet powerful, command line tool for producing data to and consuming data from Kafka. When using kafkacat as a Producer you can do so interactively, feed it from flat files, or use stdin as the input. Therefore we can simply pipe the output of the Mockaroo REST call directly into it:

    curl -s "" | \
    kafkacat -b localhost:9092 -t purchases -P

This writes 2000 records from the given schema to the purchases topic, using the Kafka broker at localhost:9092.
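The advice to avoid JSON array output matters because kafkacat in producer mode, by default, treats each line of stdin as one message, so an array would not arrive as one record per message. As a hedged sketch (the function name reject_json_array and the MOCKAROO_URL variable are hypothetical, not part of the original pipeline), a small guard can sit between curl and kafkacat and stop the pipeline if the payload looks like an array:

```shell
#!/usr/bin/env bash
# Hypothetical guard for illustration; not part of the original post.

# Passes stdin through unchanged, but fails before emitting anything if the
# first non-empty line starts with '[', i.e. the payload looks like a single
# JSON array rather than one JSON object per line.
# Leading whitespace before the '[' is not handled in this sketch.
reject_json_array() {
  local first=1 line
  while IFS= read -r line || [ -n "$line" ]; do
    if [ "$first" -eq 1 ] && [ -n "$line" ]; then
      first=0
      case "$line" in
        '['*)
          echo "input looks like a JSON array; expected one object per line" >&2
          return 1
          ;;
      esac
    fi
    printf '%s\n' "$line"
  done
}

# Example usage (MOCKAROO_URL is an assumed variable holding your saved
# schema's REST URL; broker and topic are the ones used in this post):
#   curl -s "$MOCKAROO_URL" | reject_json_array | \
#     kafkacat -b localhost:9092 -t purchases -P
```

Because the guard returns before printing anything when it sees an array, kafkacat receives empty input in that case and nothing is written to the topic.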
